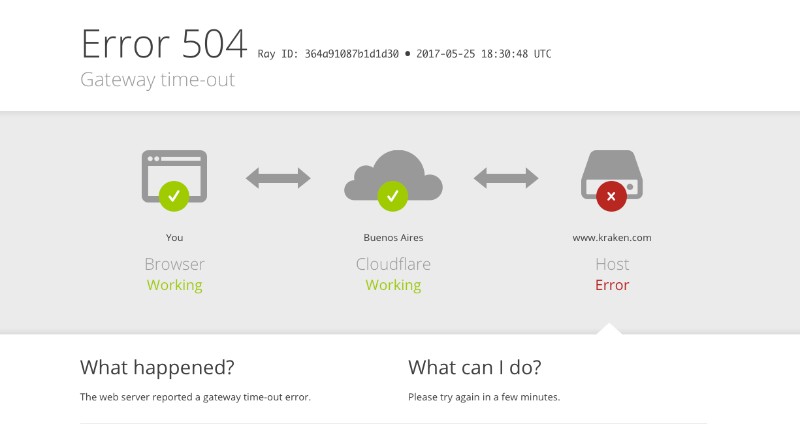

Increasing memory in GCP AI Notebook JupyterLab settings

As a regular Jupyter user, you might encounter out-of-memory errors. In JupyterLab they are not straightforward to notice, and because of that they can cause a lot of trouble. This article shows how to notice and deal with this issue.

Jupyter (in the form of JupyterLab, or AI Notebook in Google Cloud Platform) and Python (with packages like pandas and scikit-learn) are a duo used very often by Data Scientists, Data Engineers, and Analysts. Its biggest advantages are ease of setup, reproducibility, and plug-and-play usage. In some cases, however, this setup can cause trouble, specifically in the form of out-of-memory errors. JupyterLab (or AI Notebook) in GCP is set by default with a maximum of around 3.3GB of memory. If the amount of data loaded into memory (i.e. into RAM) exceeds this limit, the Jupyter kernel will “die”. By dying, we mean the termination of its process and, as a result, the loss of all data and variables that were calculated and stored in memory. This causes a lot of trouble for us: it forces a recalculation of the whole notebook, which might take a lot of time, before we can try another way to perform the calculation. Fortunately, we have a solution for that. You may also visit our Cloud Computing Consulting Services to find out how we can help your company.

How to identify that Jupyter is having an out-of-memory error, and the kernel is dying

By default Jupyter does not log all executions. In my experience, setting up proper logging requires some initial work during setup of the instance, and most of the time we do not use these logs. In this scenario, we will rely on Jupyter’s behaviour and messages.

When using the traditional Jupyter instance, i.e. Jupyter Notebook (also used as JupyterHub), the problem is easier to identify. We will see a pop-up message with the following contents: “Kernel restarting: The kernel appears to have died.”

In the case of JupyterLab, it is a bit trickier to identify. We will see that the kernel is busy (displayed as a “full circle” in the upper right of the notebook UI), and after some time, without any response, the kernel will appear to be idle (empty circle). The cell that we executed will have no output number and no output, and when executing further cells, we will see that we lost all the variables that we created and calculated prior to that. If you import large amounts of data (in our case, this was over 500MB), you might expect this error to occur.

Increasing memory in Jupyter, and therefore solving the problem

In order to increase the available memory for Jupyter, first of all ensure that your machine has enough memory. In GCP this is fairly easy via the AI Notebooks page, by picking a properly sized machine type.

After ensuring that you have enough memory, open your Jupyter instance and open a Terminal window. Enter and submit the following command:

sudo nano /lib/systemd/system/rvice

This will open a text editor with the settings of Jupyter as a service (Jupyter in GCP AI Notebooks is installed as a service on a virtual machine). The lines we are interested in are the two that set MemoryHigh and MemoryMax:

ExecStart=/bin/bash --login -c '/opt/conda/bin/jupyter lab --config=/home/jupyter/.jupyter/jupyter_notebook_config.py'
MemoryHigh=3533868160
MemoryMax=3583868160

The default memory values in Jupyter are around 3.3GB, expressed in bytes. To convert the amount of GB you need into bytes, multiply your desired amount by 1073741824. For example, if you want to set it to 16GB, the value to set would be 17179869184. Replace the MemoryHigh and MemoryMax values with your calculated value. Exit the editor, making sure that you saved the changes.

After that, restart the Jupyter service by submitting the following command:

service jupyter restart

Finally, restart the machine (go to AI Notebooks in GCP and shut down, then start the AI Notebook instance). The amount of memory available to Jupyter should now be larger, and you should be free of further memory problems.
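The GB-to-bytes conversion described in this article is easy to get wrong by hand, so here is a minimal sketch that does the multiplication and prints the two lines to paste into the service file. The helper names `gb_to_bytes` and `memory_limit_lines` are hypothetical and introduced only for illustration; the multiplier 1073741824 and the MemoryHigh and MemoryMax keys come from the article. Setting both keys to the same value is an assumption that follows the instruction to replace both with your calculated value.

```python
BYTES_PER_GB = 1073741824  # 1024 ** 3, the multiplier used in the article


def gb_to_bytes(gigabytes):
    """Convert a memory size in GB to a whole number of bytes."""
    return int(gigabytes * BYTES_PER_GB)


def memory_limit_lines(gigabytes):
    """Build the two service-file lines for the requested limit.

    Assumption: both MemoryHigh and MemoryMax get the same value.
    """
    value = gb_to_bytes(gigabytes)
    return [f"MemoryHigh={value}", f"MemoryMax={value}"]


# Example: the 16GB case from the article.
for line in memory_limit_lines(16):
    print(line)
# prints:
# MemoryHigh=17179869184
# MemoryMax=17179869184
```

The printed lines can be copied into the editor in place of the default values.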

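Because JupyterLab shows no pop-up when the kernel dies, one possible way to notice a silent restart is to store a marker variable in the first cell and check for it later: if the marker is gone, the kernel's memory was wiped. This is only a sketch of that idea under stated assumptions, not something Jupyter provides out of the box. The names `MARKER_NAME`, `set_marker`, and `kernel_was_restarted` are hypothetical, and the namespace is passed in explicitly (in a notebook you would pass `globals()`).

```python
import os
import time

MARKER_NAME = "KERNEL_MARKER"  # hypothetical variable name, chosen for this sketch


def set_marker(namespace):
    """Record the current process ID and a timestamp in the given namespace."""
    namespace[MARKER_NAME] = {"pid": os.getpid(), "set_at": time.time()}
    return namespace[MARKER_NAME]


def kernel_was_restarted(namespace):
    """Return True if the marker is missing or was set by another process."""
    marker = namespace.get(MARKER_NAME)
    return marker is None or marker["pid"] != os.getpid()
```

In a notebook, you might call `set_marker(globals())` in the first cell and `kernel_was_restarted(globals())` before any long computation that depends on earlier results.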